[New] Calvi Duo in the very popular Mauve Sylvestre

(Tax included) Shipping included

Item description

Purchased at an official Hermès boutique.

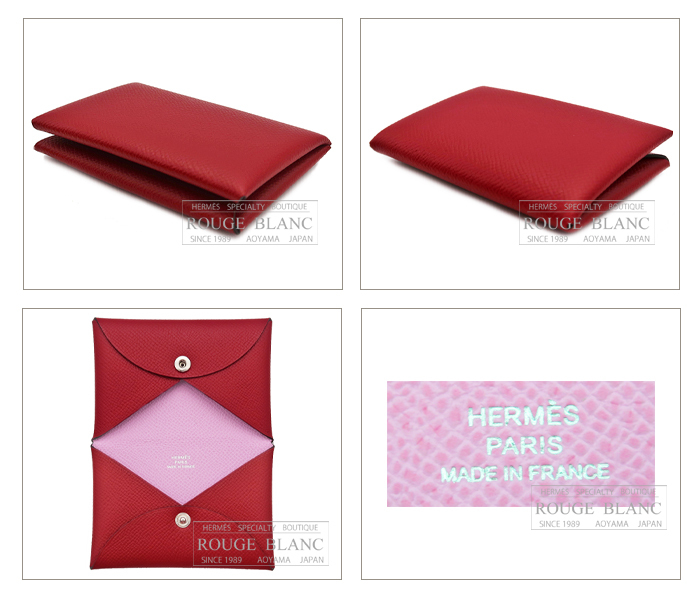

This model combines a card case and a coin case in one.

It is rarely available in stores and this is a popular color, so if you have been looking for one, don't miss it!

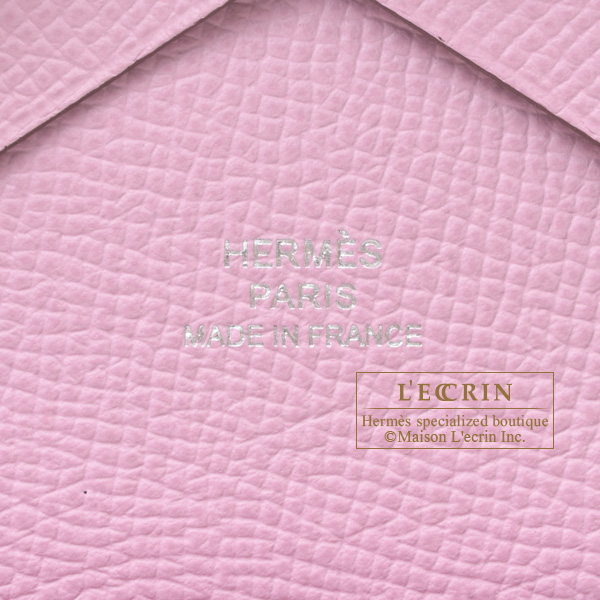

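● Model: Calvi Duo

● Color: Mauve Sylvestre

● Included accessories: box, ribbon

● Condition: new, unused

● Date stamp: U

● Specifications: snap-button closure

1 coin compartment, 1 card slot

● Size: approx. W 10.5 cm × H 7.0 cm × D 1.5 cm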

※ To prevent item swapping, returns and exchanges cannot be accepted.

Thank you for your understanding.

I have also listed a Bastia, a Béarn Soufflet, a Vingt-Quatre, and a Twilly.

What do you think?

Item details

| Category | Women > Accessories > Bifold wallets |
|---|---|
| Brand | Hermès |
| Condition | New, unused |



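Mercari's buyer protection

Your payment is held by Mercari and released to the seller after you leave a rating.

Seller

Fast shipping

This seller ships within 24 hours on average.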