Reserved for copine ☆Please read my profile first❤️ Nikon D5500

(Tax included) Shipping included

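Item description

❣️Nikon D5500❣️

Introducing a double lens kit.

Why I recommend this item

❤️The two lenses together cover a wide range from 18mm to 200mm♪ This set handles everything from close-range to telephoto shots❤️

❤️Both lenses have VR image stabilization♪

❤️No major scratches or scuffs; the condition is very clean❤️

❤️Instruction manual included❤️

❤️Comes with an SD card (32GB), and built-in Wi-Fi lets you send photos straight to your smartphone❤️

❤️An all-inclusive set you can use right away❤️

❤️1-month warranty from the delivery date❤️

❤️Free shipping nationwide❤️

Thank you for viewing☺︎ Buying without commenting first and immediate purchases are both welcome.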

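Product description

✨A simple, cute design packed with advanced features♪

In addition to its ultra-high 24.16-megapixel resolution, it has SCENE mode and effect mode, so you can take beautiful, moving photos exactly the way you want♪

Wi-Fi is built in, so you can send photos to your smartphone as soon as you take them.

That makes posting to social media even more fun♪

With a vari-angle LCD monitor and live view shooting, you can take selfies while watching the screen♪

A DSLR loaded with advanced features♪

✨Touchscreen

✨Full HD video recording

✨Lightweight at 470g, small and compact, and easy to hold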

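《Operating condition》

All functions operate very smoothly.

Basic operations such as normal shooting have been checked, and the camera is in good condition.

There is a scratch on the sensor that shows up as a small line in images, but it is minor and barely noticeable.

(An actual sample photo is shown in image 10.)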

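《Body and lens exterior》

Almost no scratches or scuffs; the condition is very clean.

《Lens interior》

The lenses and viewfinder contain only the small dust particles typical of interchangeable-lens cameras, which do not affect normal shooting♪

⭐️Set contents⭐️

・Camera body

・Standard lens (AF-S 18-55mm VR)

・Telephoto lens (AF-S 55-200mm VR)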

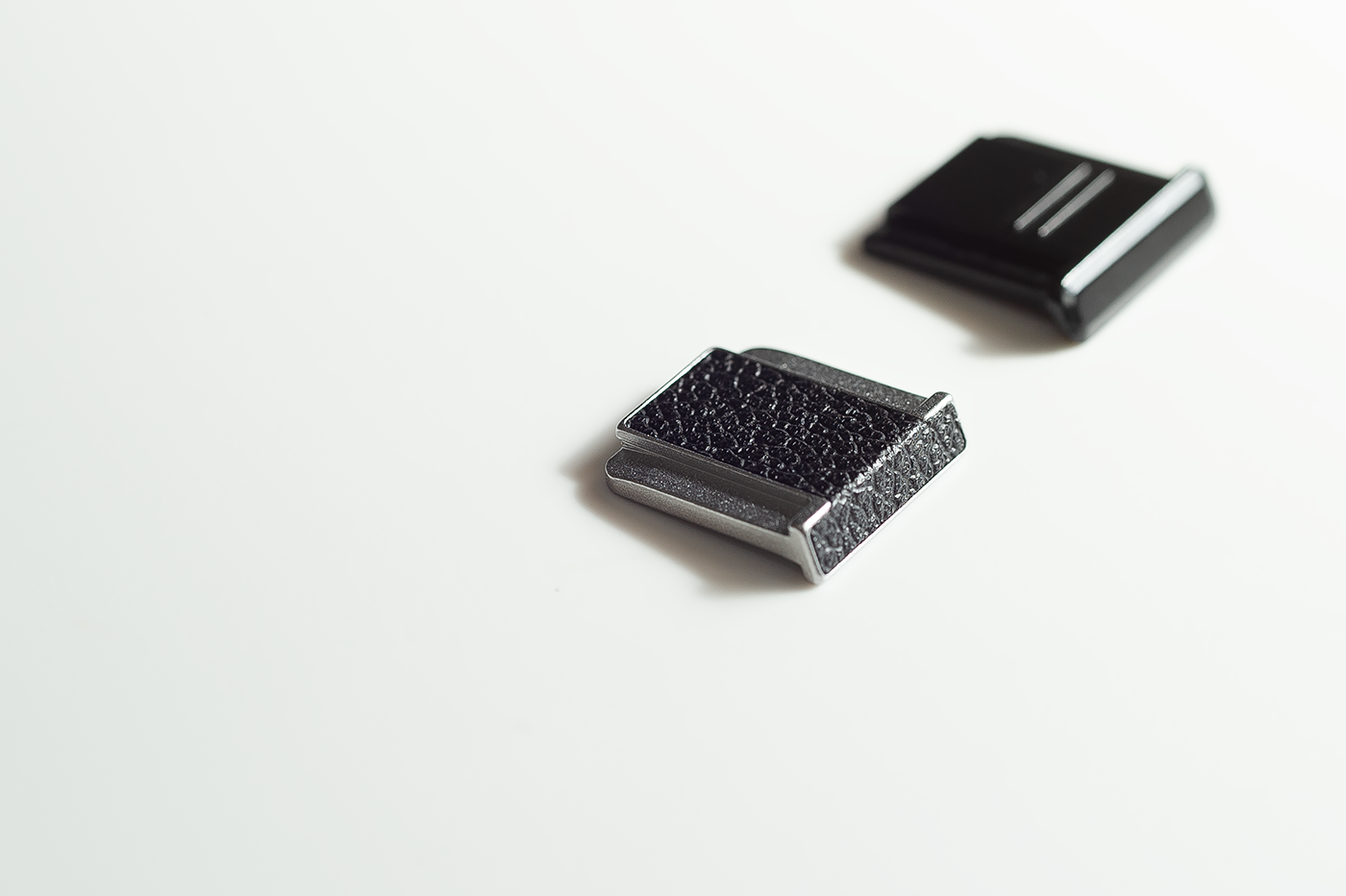

・Front and rear lens caps

・Body cap

・Battery

・Charger set

・New SD card (32GB)

・☆1-month warranty☆

・Instruction manual

Plus!

・Blower

・Microfiber cloth

・Lens cleaning paper

Feel free to leave a comment.

※I have many other cameras listed as well.

(Click here)

↓↓↓↓↓

#SakiCameraKawaii

Item information

| Category | Home Appliances, Smartphones & Cameras > Cameras > Digital Cameras |
|---|---|
| Brand | Nikon |
| Condition | No noticeable scratches or stains |


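Mercari's commitment to safe transactions

Payment is held by the Mercari office and transferred to the seller after the transaction is rated

Seller

Speedy shipping

This seller ships within 24 hours on average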